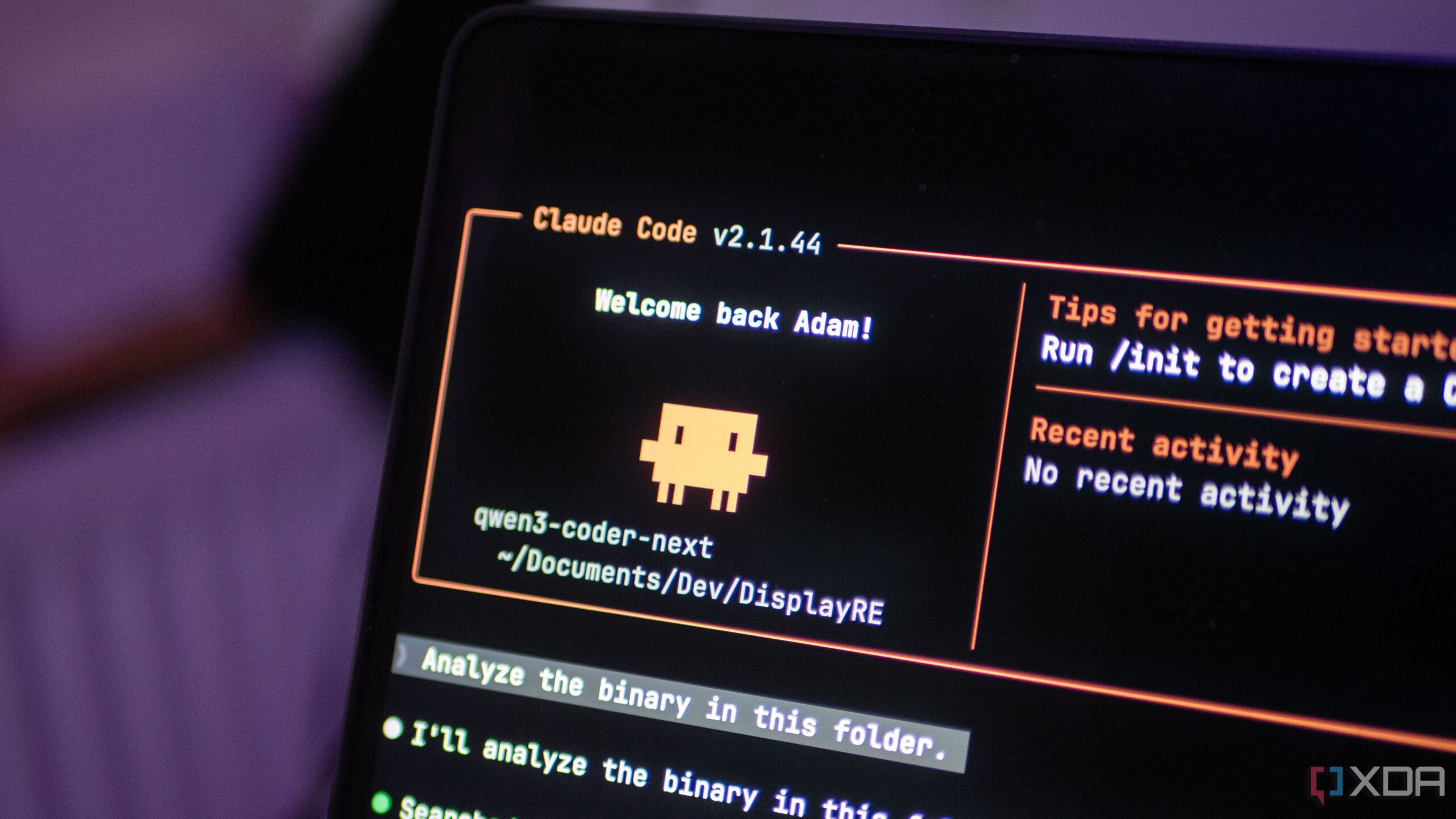

UPDATE: Developers are starting to run Qwen3-Coder-Next on the Lenovo ThinkStation PGX for serious coding work, a notable shift in how local models are being used.

The model has 80 billion parameters and an ultra-sparse architecture, and early reports suggest it handles real coding tasks quickly and efficiently. Users report that it can plan multi-step tasks, call tools, and edit files without manual hand-holding. For local LLMs, that is a meaningful step forward.

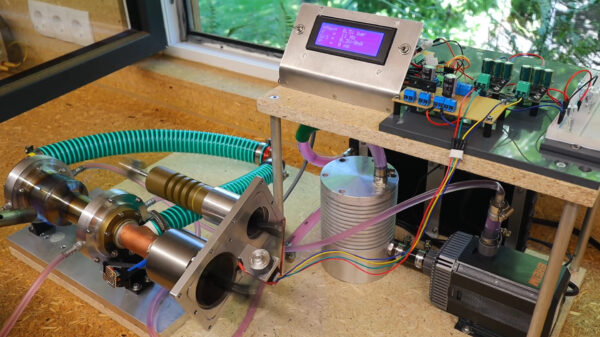

The hardware is a large part of the story. The Lenovo ThinkStation PGX has 128GB of unified LPDDR5x memory shared between the CPU and GPU, so model data does not have to cross a PCIe bus between separate memory pools. As a result, users can run the demanding Qwen3-Coder-Next model without significant slowdowns.
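As a rough check on why 128GB matters for an 80-billion-parameter model, the weight footprint can be estimated from the parameter count and the number of bits stored per weight. The sketch below is back-of-the-envelope arithmetic only. The bit widths are illustrative assumptions (the reports do not say which precision or quantization is used), the function names are made up for this example, and the estimate ignores the KV cache, activations, and whatever the operating system needs.

```python
PARAMS = 80_000_000_000  # 80 billion parameters, as reported for the model
MEMORY_GB = 128          # unified memory on the ThinkStation PGX (decimal GB assumed)


def weight_footprint_gb(params: int, bits_per_weight: int) -> float:
    """Approximate size of the weights alone, in decimal gigabytes."""
    return params * bits_per_weight / 8 / 1e9


def fits_in_memory(params: int, bits_per_weight: int,
                   memory_gb: float = MEMORY_GB) -> bool:
    """True if the weights alone fit; runtime overhead is NOT included."""
    return weight_footprint_gb(params, bits_per_weight) <= memory_gb


if __name__ == "__main__":
    # Bit widths are illustrative assumptions, not the model's actual format.
    for bits in (16, 8, 4):
        size = weight_footprint_gb(PARAMS, bits)
        verdict = "fits" if fits_in_memory(PARAMS, bits) else "does not fit"
        print(f"{bits:>2}-bit weights: ~{size:.0f} GB, {verdict} in {MEMORY_GB} GB")
```

Under these assumptions, 16-bit weights come to about 160 GB and would not fit, while 8-bit (about 80 GB) and 4-bit (about 40 GB) weights would leave room for runtime overhead. Treat this as an estimate of scale, not a statement about how the model is actually packaged.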

For those who rely on local models, the implications are significant. As one user noted, running coding tasks directly on local hardware removes the dependency on cloud services, along with their usage limits and network latency. This matters most to professionals doing sensitive coding and security research, because code and data never leave the machine, which eases the privacy concerns that come with cloud-based tools.

Setup is reported to be straightforward, and developers can get started in minutes. A single Docker command pulls the necessary components from NVIDIA’s registry, after which Claude Code can be pointed at the locally served Qwen3 model. Users have praised this ease of setup, which makes the configuration accessible to a wider range of developers.
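Once a model is being served locally, a quick way to confirm it responds is to send it a single prompt. The sketch below is not the Docker command mentioned above and is not how Claude Code itself connects. It assumes, purely for illustration, that the local server exposes an OpenAI-style chat completions endpoint. The base URL, port, and model name are hypothetical placeholders to be replaced with whatever your server actually reports.

```python
import json
import urllib.request

# Hypothetical values: replace with the address and model name your server uses.
BASE_URL = "http://localhost:8000/v1"
MODEL_NAME = "qwen3-coder-next"


def build_chat_request(prompt: str, base_url: str = BASE_URL,
                       model: str = MODEL_NAME) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completions request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": 256,
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def extract_reply(response_body: bytes) -> str:
    """Pull the assistant text out of an OpenAI-style response body."""
    data = json.loads(response_body)
    return data["choices"][0]["message"]["content"]


if __name__ == "__main__":
    req = build_chat_request("Write a function that reverses a string.")
    # Show what would be sent. To actually send it, with a server running:
    #   with urllib.request.urlopen(req, timeout=60) as resp:
    #       print(extract_reply(resp.read()))
    print(req.get_method(), req.full_url)
    print(req.data.decode("utf-8"))
```

Keeping request construction separate from sending makes it easy to inspect the payload before any network traffic happens. That is useful when the endpoint details are still uncertain.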

Qwen3-Coder-Next does have limitations compared to leading cloud models, particularly on complex reasoning tasks. Users should review its output carefully, especially in security-sensitive work. For day-to-day coding, though, early adopters describe it as an efficient option.

The cost of entry is significant: the complete setup is priced at over $3,000. For professionals in coding and security, early users say the investment is paying off. They report that tasks which previously took hours can now be completed in minutes.

Community feedback so far has been overwhelmingly positive, with many users saying the model brings a practical usefulness to local setups that they had not experienced before.

The broader takeaway is that local LLMs are no longer just a privacy consideration. They are becoming practical tools for improving productivity in everyday coding.

Stay tuned for more as we monitor how local LLMs like Qwen3-Coder-Next are reshaping the coding landscape!