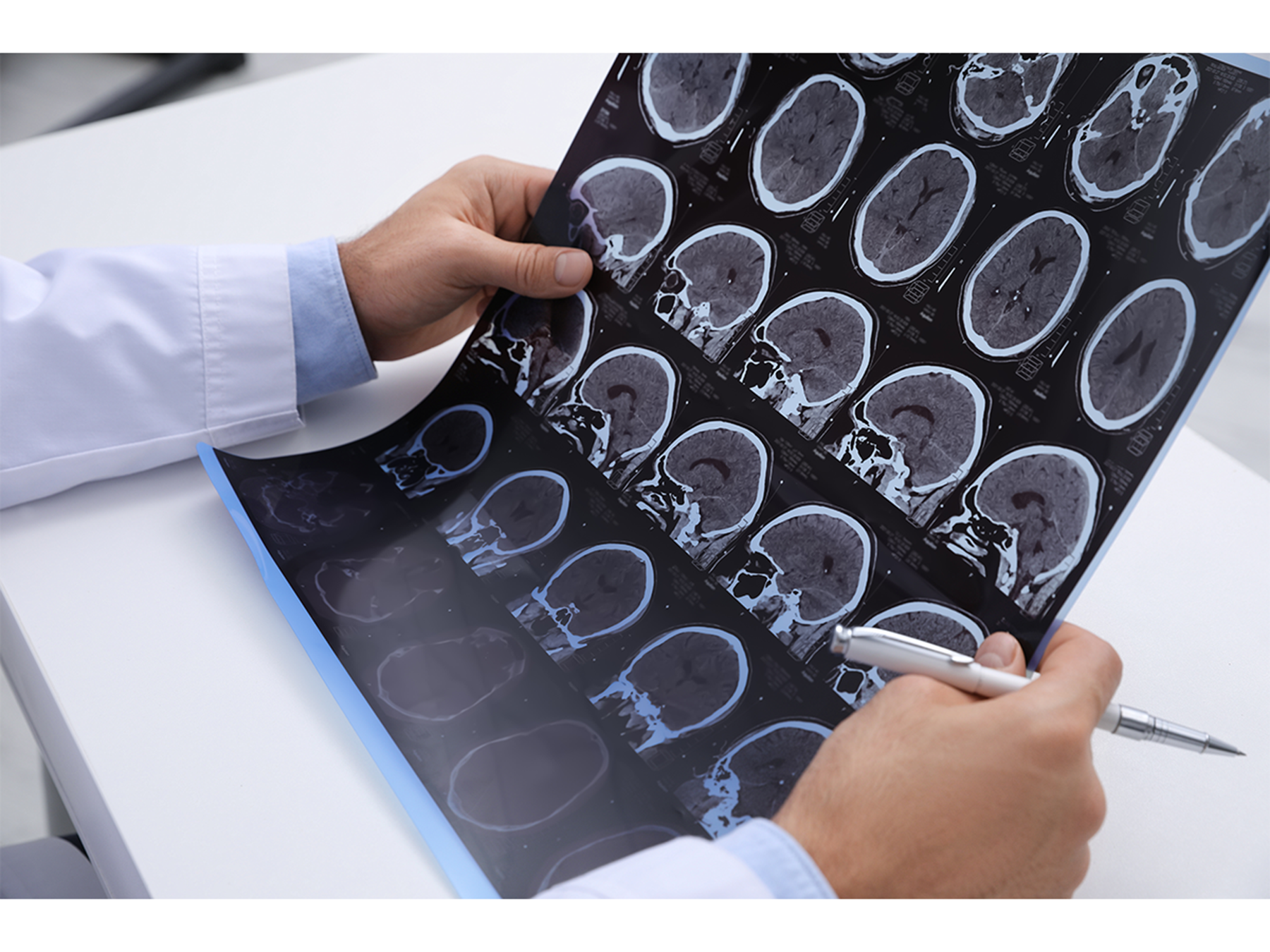

Artificial intelligence (AI) is reshaping medicine, including how legal liability is assigned when patients are harmed. Research led by Michael Bruno, a professor at Penn State College of Medicine, indicates that the way AI is integrated into clinical workflows significantly affects perceptions of physician responsibility. The study, published on March 10, 2023, in the journal Nature Health, highlights how these perceptions can influence legal outcomes in malpractice cases.

Bruno collaborated with researchers from Brown University and Seton Hall University School of Law to explore how mock jurors evaluate a radiologist’s liability depending on how the radiologist interacts with AI. The study presented jurors with a hypothetical scenario in which a patient suffered irreversible brain damage after a radiologist failed to detect a brain bleed on a CT scan, even though AI had correctly flagged the scan as abnormal. Jurors were nearly 50% more likely to side with the plaintiff when the radiologist reviewed the scan only once, after receiving AI feedback, than when the radiologist reviewed it twice: once before receiving AI input and again afterward.

The research revealed that nearly 75% of mock jurors deemed the radiologist negligent in the single-review scenario, compared with 53% when the radiologist conducted two reviews. These results suggest that changing how radiologists engage with AI during diagnosis could reduce their legal risk.

Implications for Healthcare Stakeholders

The findings raise important considerations for stakeholders in the healthcare industry. Brian Sheppard, a co-author and professor at Seton Hall University School of Law, emphasized that this information is crucial for decision-makers contemplating the adoption of AI technologies. Stakeholders need to weigh the potential benefits against the risks of legal liability, particularly when deciding on workflows that involve AI diagnostics.

Bruno previously organized a research summit focused on “Human Factors and Artificial Intelligence in Healthcare,” where experts examined the implications of AI for clinical practice. The summit aimed to establish research priorities for the evolving landscape of human-AI collaboration in healthcare.

The study focused on a radiology case because AI is more deeply integrated into radiology than into other areas of medicine. The research team sought to understand juror perceptions in a context where AI is already commonly used, which made the scenario plausible.

Challenges and Considerations

The researchers acknowledged that changes in radiologist workflow, such as how many times an image is reviewed, could mitigate legal exposure. However, these modifications come with their own challenges. Grayson Baird, an associate professor at Brown University, noted that biases may discourage radiologists from questioning AI outputs because of the potential repercussions of being wrong. This dynamic can increase healthcare costs, since patients may require additional follow-up care or testing, and it can affect the overall patient experience.

While the study did not delve deeply into the reasons behind the jurors’ perceptions, it underscored that the context of AI usage plays a critical role in determining fault. Previous research indicated that jurors were less likely to hold a radiologist liable when the radiologist’s interpretation agreed with the AI’s than when it did not. Jurors were also more forgiving when AI error rates were disclosed.

Because AI technology is evolving rapidly, it is important to understand how its use in clinical settings shapes perceptions of liability. Michael Bernstein, an associate professor at Brown University, highlighted the importance of understanding these dynamics as AI continues to integrate into healthcare, stating that how people perceive AI will influence future legal and medical practices.

As the healthcare industry navigates the complexities of AI integration, the findings from this study serve as a crucial reminder of the potential implications for legal responsibility and patient care. The evolving relationship between AI technology and medical liability will require ongoing research and dialogue among stakeholders to ensure that patient safety and quality care remain the priority.