Local large language models (LLMs) are gaining traction and shedding their early reputation as mere novelties. Early attempts at running AI models on personal devices often performed poorly next to cloud-based services from companies like OpenAI and Google, but recent advancements have made local LLMs genuinely useful for a variety of tasks.

Today, users can run local AI models to tackle everyday tasks while keeping greater control over their data. This shift reflects a growing recognition of the importance of privacy, latency, and cost, three factors that matter to many individuals. Local models do not entirely replace their cloud-based counterparts, but they provide valuable solutions for specific applications.

Practical Applications of Local AI Models

Local LLMs excel at data processing tasks that many find cumbersome. Models such as gpt-oss-20b, gemma-27b, and seed-oss-36b are adept at extracting information from various formats, including PDFs, and converting unstructured data into organized tables. These capabilities enable users to efficiently manage their personal data without sending sensitive information to external servers.

For instance, using applications like Paperless-ngx, individuals can store private documents securely while relying on local AI models for text extraction and organization. This approach alleviates the data privacy concerns that often accompany cloud-based solutions. Local models also handle proofreading and simple code corrections well, offering an alternative to popular tools like Grammarly without the associated privacy risks.
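The extraction workflow described above can be sketched in a few lines of Python. This is a minimal illustration, not a description of any particular tool's internals. It assumes the document text has already been extracted (for example by OCR) and that a local server exposes an OpenAI-compatible chat endpoint, as LM Studio's local server does by default at `http://localhost:1234/v1`. The model identifier, field names, and helper functions are placeholders chosen for this example.

```python
import json
import urllib.request

# Assumption: an OpenAI-compatible server is listening locally. Adjust the
# address and model identifier to match your own setup.
BASE_URL = "http://localhost:1234/v1"
MODEL = "gpt-oss-20b"  # placeholder; use the identifier your server reports


def build_extraction_request(document_text, fields):
    """Build a chat-completions payload asking for a JSON array of records."""
    instructions = (
        "Extract every record from the document. Reply with only a JSON array "
        "of objects using exactly these keys: " + ", ".join(fields) + ". "
        "Use null for missing values."
    )
    return {
        "model": MODEL,
        "temperature": 0,  # keep the output as repeatable as possible
        "messages": [
            {"role": "system", "content": instructions},
            {"role": "user", "content": document_text},
        ],
    }


def parse_rows(reply_text, fields):
    """Turn the model's reply into table rows, ignoring any text around the array."""
    start, end = reply_text.find("["), reply_text.rfind("]")
    if start == -1 or end < start:
        raise ValueError("no JSON array found in model reply")
    records = json.loads(reply_text[start:end + 1])
    return [[record.get(field) for field in fields] for record in records]


def extract_table(document_text, fields):
    """Send the document to the local server and return rows (needs a running server)."""
    payload = json.dumps(build_extraction_request(document_text, fields)).encode()
    request = urllib.request.Request(
        BASE_URL + "/chat/completions",
        data=payload,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=120) as response:
        body = json.load(response)
    return parse_rows(body["choices"][0]["message"]["content"], fields)
```

Calling `extract_table(invoice_text, ["date", "vendor", "total"])` would return one row per record found. Because the request never leaves `localhost`, the document stays on the user's machine, which is the point of the local approach.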

Enhanced Voice Assistants and User Control

Voice assistants powered by local LLMs are another area where users find significant benefits. Many individuals express distrust towards major voice assistants, fearing potential data collection by companies like Amazon and Google. By deploying a local AI-powered assistant, users can enjoy a more trustworthy experience while executing commands quickly and efficiently.

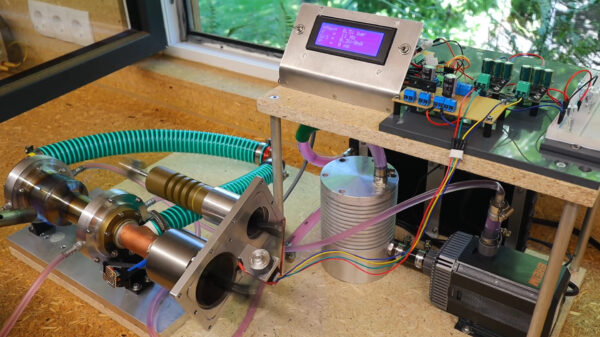

One example is a GLaDOS-themed voice assistant built from a personal voice processing pipeline and a local LLM. Integrated with Home Assistant, it handles tasks such as home automation without the latency that cloud processing introduces. This setup lets users keep control of their voice data and tailor the experience to their preferences.
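To make the last step of such a pipeline concrete, here is a hedged sketch of how a local model's reply could be turned into a Home Assistant action. It is not the pipeline described above. It assumes the model has been prompted to answer with a small JSON object (`domain`, `service`, `entity_id`) and that the request goes to Home Assistant's REST API, which accepts service calls at `/api/services/<domain>/<service>` with a bearer token. The address, token, and allow-list are placeholders.

```python
import json
import urllib.request

# Assumptions: Home Assistant's REST API is reachable at this address and a
# long-lived access token has been created. Both values are placeholders.
HA_URL = "http://homeassistant.local:8123"
HA_TOKEN = "YOUR_LONG_LIVED_TOKEN"

# Hypothetical allow-list: the only actions the assistant may trigger.
ALLOWED_ACTIONS = {("light", "turn_on"), ("light", "turn_off"), ("switch", "toggle")}


def build_service_call(llm_reply):
    """Validate the model's JSON reply and build the Home Assistant request."""
    intent = json.loads(llm_reply)
    action = (intent["domain"], intent["service"])
    if action not in ALLOWED_ACTIONS:
        # Refuse anything outside the allow-list instead of trusting the model.
        raise ValueError(f"action not permitted: {action}")
    return urllib.request.Request(
        f"{HA_URL}/api/services/{action[0]}/{action[1]}",
        data=json.dumps({"entity_id": intent["entity_id"]}).encode(),
        headers={
            "Authorization": f"Bearer {HA_TOKEN}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

Passing the returned request to `urllib.request.urlopen` would execute the command. Checking the reply against an allow-list first is a design choice for this sketch: a small local model can misread a spoken command, so it should only ever be able to trigger actions the user has approved.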

While local LLMs demonstrate impressive capabilities, they are not without limitations. Tasks requiring extensive reasoning or broad knowledge still favor cloud-based models. Users should not expect local systems to rival advanced cloud models such as Claude or GPT-5. Instead, the value of local models lies in handling specific tasks efficiently while giving users complete ownership of their data and workflow.

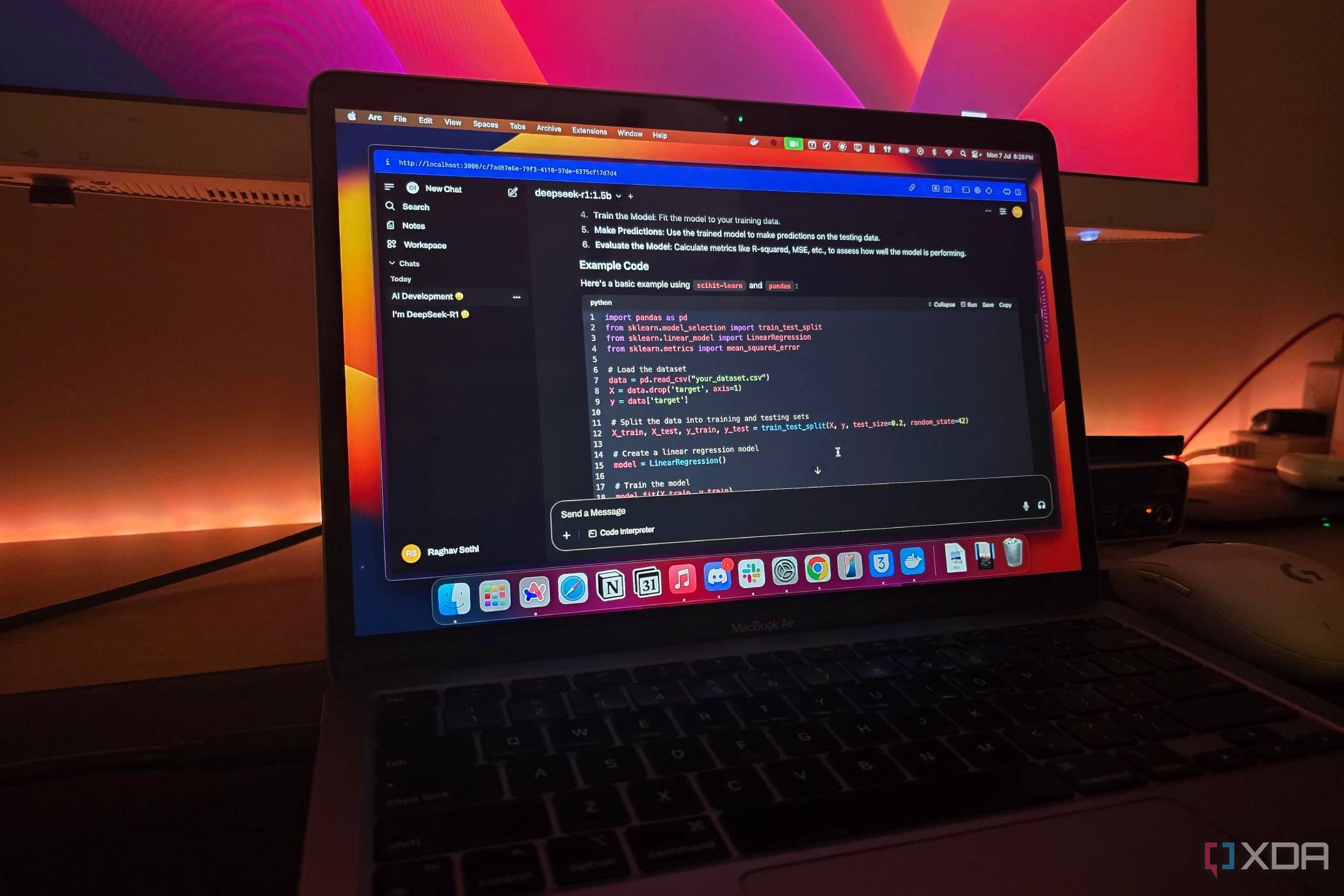

In an era where digital privacy is paramount, local LLMs provide a compelling alternative for users seeking more control. With reasonable hardware requirements and mature tooling, getting started with local AI models is increasingly accessible. Tools like LM Studio and KoboldCpp let users run these models without extensive technical expertise.

The evolution of local LLMs marks a significant shift in how individuals interact with technology. By prioritizing privacy, control, and reliability, these models have established themselves as useful tools for a wide range of everyday tasks. As the technology continues to mature, local LLMs may well become an integral part of many users’ digital lives.