Meta has announced a significant policy change, restricting access to its AI characters for teenagers. The decision reflects growing concerns about the impact of AI technology on young users’ mental health. The company plans to halt access until it can develop improved versions of its AI offerings.

In a blog post released on October 20, 2023, Meta stated, “Starting in the coming weeks, teens will no longer be able to access AI characters across our apps until the updated experience is ready.” This policy will affect users who have provided a birth date indicating they are underage, as well as those flagged by the company’s age prediction technology.

This announcement follows Meta’s earlier commitment to introduce parental supervision tools for managing children’s interactions with AI characters. These tools, intended to allow parents to monitor and potentially restrict their children’s access, were originally promised for early 2023. However, the launch has been delayed, leading to the current decision to suspend teen access entirely.

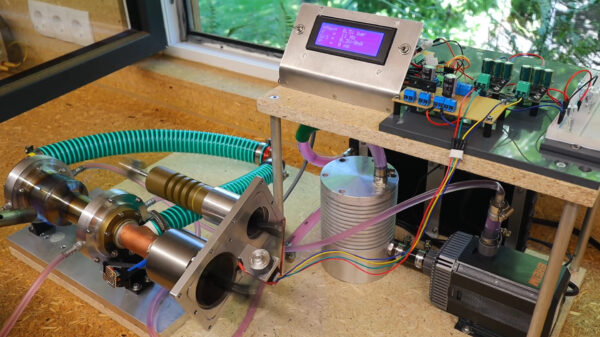

The company emphasizes that it is developing a new version of its AI characters aimed at enhancing user experience. Until these updates are ready, the temporary suspension for teenagers will remain in effect.

Concerns about teenagers’ use of AI have intensified recently, particularly around the phenomenon referred to as AI psychosis. The term describes mental health problems that can arise from interactions with AI, especially when responses are overly supportive or sycophantic. Multiple cases involving teenagers have ended tragically, highlighting the urgent need for safe engagement practices.

A survey revealed that one in five high school students in the United States report having had a romantic relationship with an AI, underscoring the popularity and potential risks associated with these technologies.

Meta faces heightened scrutiny over its AI interactions, especially after an internal document surfaced showing that company guidelines allowed its chatbots to engage minors in “sensual” conversations. Reports of inappropriate exchanges involving AI characters based on celebrities, such as John Cena, have raised alarm among parents and child advocacy groups. These incidents have prompted calls for stricter regulation of AI platforms.

Meta is not alone in facing backlash. Character.AI, another platform providing AI companions, also restricted access for minors in October 2023 following lawsuits from families alleging that its chatbots encouraged harmful behavior in children.

The decision to limit access for teenagers signals a cautious approach from Meta as it navigates the complexities of AI technology and its implications for younger users. Whether the company follows through on its commitment to developing safer AI experiences remains to be seen, but the current pause reflects a response to pressing concerns about mental health and technology.