The relationship between the prominent AI firm Anthropic and the U.S. government reached a critical juncture on February 27, 2026, when President Donald J. Trump announced a ban on the use of Anthropic’s technology by federal agencies. The decision followed prolonged negotiations over a military contract and the Pentagon’s demand for unrestricted access to Anthropic’s AI models, specifically the Claude family. In a significant escalation, Secretary of War Pete Hegseth designated Anthropic a “Supply-Chain Risk to National Security,” effectively terminating a $200 million military contract and imposing a six-month deadline for removing the company’s technology from Pentagon systems.

Despite this setback, Anthropic’s business momentum has been remarkable. The company’s Claude Code service has rapidly grown into a division generating over $2.5 billion in annual recurring revenue. Earlier this month, Anthropic announced a staggering $30 billion Series G funding round, resulting in a valuation of $380 billion. The release of specific plugins and skills tailored for various industries has driven significant productivity gains for numerous companies, including Salesforce, Spotify, and Thomson Reuters.

The Pentagon’s abrupt decision raises questions about the rationale behind labeling Anthropic a “Supply-Chain Risk.” The conflict arose from a fundamental disagreement regarding the use of AI models for mass surveillance and fully autonomous weapon systems. Anthropic’s CEO, Dario Amodei, has maintained that certain ethical guardrails are necessary to avoid unintended consequences. Hegseth criticized this stance as “arrogance and betrayal,” arguing that unrestricted access is essential for military operations.

As a direct consequence of the ban, the Pentagon has instructed all contractors and partners to cease commercial activities with Anthropic. This leaves a vacuum that competitors are eager to fill. OpenAI has already announced a partnership with the Pentagon, while Elon Musk’s xAI has signed agreements allowing its AI models to be employed in classified systems, adhering to the Pentagon’s demands for “all lawful use.”

Implications for Enterprises

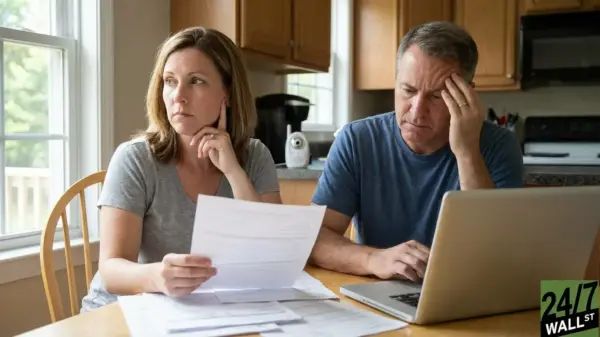

The fallout from the Anthropic ban presents a critical lesson for businesses reliant on AI technologies. The situation underscores the importance of model interoperability, particularly for those engaged in sectors that may require compliance with governmental standards. Enterprises should avoid being locked into a single provider’s API, as this may hinder flexibility and adaptability in a rapidly changing market environment.

Organizations are advised to maintain a “warm standby”: a secondary model that is already integrated and tested, so they can switch between models from different providers, such as Claude, GPT-4o, and Gemini 1.5 Pro, without significant performance degradation. Without such a framework, sudden restrictions on a primary provider could cause operational disruptions.

Diversifying AI Supply Chains

In light of the recent upheaval, the AI market is experiencing fragmentation that presents both challenges and opportunities. While major U.S. firms scramble to align with the Pentagon’s preferences, alternatives are emerging. Google, the maker of Gemini, saw its stock rise following the announcement, and OpenAI’s substantial investment from Amazon signals a shift in power dynamics within the industry.

Some companies are already exploring lower-cost options, such as Alibaba’s open-source models for specific functions, highlighting the need for flexibility amid geopolitical considerations. Additionally, enterprises are increasingly turning to in-house hosting solutions, utilizing domestic models like OpenAI’s GPT-OSS, IBM’s Granite, and others. This shift offers a robust insurance policy against potential external restrictions.

The ever-evolving landscape necessitates that businesses conduct thorough due diligence to ensure compliance with federal standards. Companies aiming to maintain contracts with governmental agencies must be prepared to certify that their products do not rely on any prohibited model providers. The need for strategic redundancy has never been more apparent, as the AI sector grapples with the implications of defense procurement and executive authority.
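One way to support that kind of certification internally is to keep an inventory of which provider each product component depends on and check it against a restricted list. The sketch below assumes such an inventory already exists as a simple mapping; `PROHIBITED_PROVIDERS`, `find_prohibited`, and all component and provider names are hypothetical placeholders, and the check is not a substitute for any official compliance process.

```python
from __future__ import annotations

# Hypothetical restricted list; a real one would come from legal or procurement.
PROHIBITED_PROVIDERS = {"example-banned-provider"}


def find_prohibited(dependencies: dict[str, str], prohibited: set[str]) -> list[str]:
    """Return the components whose model provider is on the prohibited list.

    `dependencies` maps component name -> provider name. Matching ignores
    case and surrounding whitespace.
    """
    normalized = {p.strip().lower() for p in prohibited}
    return sorted(
        component
        for component, provider in dependencies.items()
        if provider.strip().lower() in normalized
    )


# Illustrative inventory (all names are made up).
deps = {
    "support-chatbot": "Example-Banned-Provider",
    "search-ranking": "in-house",
    "doc-summarizer": "other-vendor",
}

flagged = find_prohibited(deps, PROHIBITED_PROVIDERS)
print(flagged)
```

Run as part of a build or release check, a list like `flagged` gives an early signal of which components would need to move to a standby provider before a certification is signed.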

The recent developments surrounding Anthropic are a stark reminder that enterprises need to diversify their AI suppliers and build systems that can adapt rapidly. Navigating this environment will require forward-thinking strategies that prioritize flexibility, redundancy, and compliance, with model interoperability as a critical component of modern enterprise infrastructure.